Aerospace Human Factors and AI [part 6/6]

Future Directions and Integration Strategies for HF and AI in Aviation Organisations

The success of AI in aerospace organisations relies on a strategic approach that seamlessly integrates Human Factors with AI.

My focus is on AI for Business, including activities that involve airworthiness decisions.

Airborne AI-based systems will have to meet rigorous requirements to achieve certification. That is not the focus of today’s article, but it is worth noting that EASA also plans to introduce similar requirements across all the domains of the Basic Regulation.

This is the sixth and final article in the six-part series on Human Factors (HF) and AI, concentrating specifically on future directions and integration strategies for HF and AI in aviation organisations:

Part 1 - Introduction to Aviation Human Factors (HF)

Part 2 - The Relationship Between Human-Centred AI (HCAI) and Aviation Human Factors

Part 3 - AI's Positive Impact on Aviation Human Factors

Part 4 - Emerging Human Factors Challenges Due to AI Adoption in Aviation

Part 5 - Understanding EASA's Building Block #3: A Deep Dive into Regulatory Compliance

Part 6 - Future Directions and Integration Strategies for HF and AI in Aviation

Here is what I will cover today:

My Strategic Approaches to Merging Aerospace Human Factors with AI

Human-Centred AI

Change Management

Organisational Governance

AI Culture Readiness

Conclusions

Let’s dive in! 🤿

My Strategic Approaches to Merging Human Factors with AI

If you ask me what my main focus would be when considering the integration of Aviation Human Factors with AI, I would highlight four strategic approaches:

Human-Centred AI

Change Management

Organisational Governance

AI Culture Readiness

Let me summarise the key requirements for each of these.

Human-Centred AI

Human-Centred AI places the needs, capabilities, and limitations of humans at the forefront of AI development and implementation.

Human-Centred AI ensures that AI systems are designed to augment human performance rather than replace it.

By prioritising user-friendly interfaces and intuitive interactions, Human-Centred AI helps reduce cognitive load and potential errors, ultimately enhancing overall safety and efficiency.

In the article “Making positive impact with Human Centered AI (HCAI) in Aerospace”, I introduced the following key aspects of HCAI to enhance human capabilities and well-being:

User-Centric Design: Developing AI systems with a strong focus on the end-user's experience, needs, and feedback.

Ethical Considerations: Embedding ethical principles in the AI development process to address issues like fairness, privacy, transparency, accountability, and avoiding bias.

Empowerment: Designing AI to augment human abilities, support decision-making, and empower users, rather than replacing or diminishing human roles and capabilities.

Transparency and Explainability: Making AI systems transparent, with decisions that are understandable and explainable to users.

Safety and Reliability: Ensuring AI systems are safe, secure, and reliable, minimising risks to individuals and society, and enabling users to have confidence in the technology.

Collaborative Development: Involving stakeholders, including potential users and experts from different fields, in the development process to ensure the technology is aligned with human values and societal norms.

Regulatory Compliance: Adhering to legal and regulatory frameworks designed to protect individual rights and promote public good.

Change Management

Successful integration of AI within aviation organisations requires a robust change management strategy.

After all, your organisation might not be ready for change.

Did you know that studies consistently show that between 50% and 70% of major organisational changes fail due to poor planning, lack of preparation, and insufficient consideration of readiness and the human aspect of change?

Change management is the process of preparing, planning, implementing, and monitoring changes to an organisation's processes, systems, or structures.

AI is no different from any other change: the change management process consists of several steps, starting with the Change Impact and Readiness Assessment.

Organisational Governance

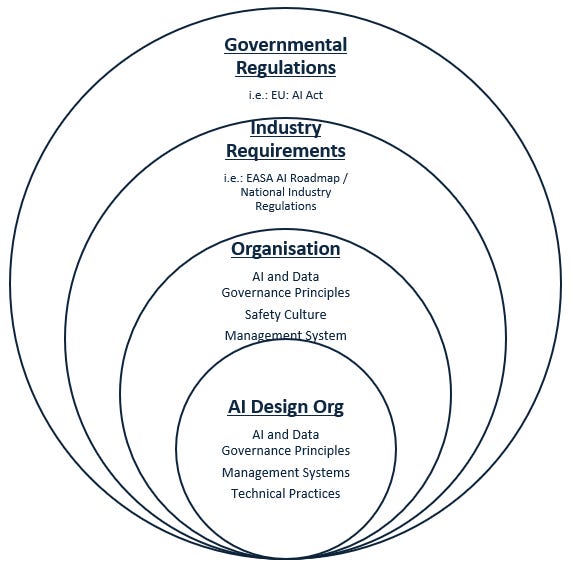

As introduced in the article “The New ISO 42001 Standard: A Leap Forward in AI Governance”, placing the human at the centre, the multilayered governance framework can be exemplified as follows:

Strong organisational governance is essential for the effective and ethical deployment of AI in aviation.

This involves establishing policies, standards, and frameworks to guide AI development and use.

Governance ensures that AI applications align with organisational values, regulatory requirements, and safety standards.

Key components include:

Ethical Guidelines: Defining ethical principles to govern AI development and usage, ensuring fairness, transparency, and accountability.

Compliance and Standards: Adhering to industry standards and regulatory requirements, such as those set by EASA and other relevant bodies.

Risk Management: Identifying, assessing, and mitigating risks associated with AI implementation to protect safety and security.

Performance Metrics: Establishing metrics to evaluate the effectiveness and efficiency of AI systems, ensuring they meet organisational objectives and improve operational performance.

AI Culture Readiness

Cultivating a strong organisational AI culture is essential for the successful integration and sustainable use of AI in aviation.

Cultural change and adaptation take time, but the benefits of a well-developed AI culture are substantial.

Building this culture involves:

Leadership Commitment: Actively championing AI initiatives and demonstrating commitment through actions and decisions.

Vision and Values: Clearly articulating the vision for AI integration and aligning it with the organisation's core values.

Open Mindset: Encouraging an open and flexible mindset among employees, where innovation and experimentation with AI are welcomed and mistakes are viewed as learning opportunities.

Conclusions

Drawing on my background and my knowledge and expertise in AI, I propose the following approaches so that aviation organisations can effectively integrate Human Factors with AI, fostering a safe, efficient, and human-centric operational environment:

Human-Centred AI

Change Management

Organisational Governance

AI Culture Readiness

However, with a risk-based mindset and safety as our priority, I advocate for a progressive yet cautious approach to introducing this disruptive technology within organisations.

Stay tuned for the next article, which will summarise the key takeaways from the six-part series on Human Factors and AI.

That's all for today.

See you next week 👋

Disclaimer: The information provided in this newsletter and related resources is intended for informational and educational purposes only. It reflects both researched facts and my personal views. It does not constitute professional advice. Any actions taken based on the content of this newsletter are at the reader's discretion.