Building Trustworthy AI in Aerospace

Exploring AI Trustworthiness in Aerospace, with an emphasis on the EASA Concept Paper's guidance for enhancing safety, ethics, and transparency in AI applications.

In today’s article I navigate the complexity of AI Trustworthiness in Aerospace.

My compass? The "EASA Concept Paper: guidance for Level 1 & 2 machine learning applications".

But let’s start from the beginning. What is Trustworthiness in AI?

Trustworthiness in the AI field refers to the assurance that AI systems perform reliably, safely, and ethically, producing consistent and fair outcomes.

It encompasses several key principles that aim to make AI systems more secure, private, transparent, and accountable, ultimately building user trust in AI technologies.

The European Commission's High-Level Expert Group on AI has identified the following seven requirements for trustworthy AI:

Human agency and oversight: AI systems should enhance, not diminish, human autonomy and decision-making capabilities. They should also include effective oversight mechanisms to ensure they work as intended.

Technical robustness and safety: AI should be secure, reliable, and robust enough to deal with errors or inconsistencies during all life cycle phases.

Privacy and data governance: Personal data collected by AI systems should be securely managed and processed, respecting privacy and data protection rights.

Transparency: The data, system, and AI decision-making processes should be understandable to users and stakeholders. This includes explainability of AI system outputs and decisions.

Diversity, non-discrimination, and fairness: AI systems should consider diverse human abilities, skills, and requirements, and ensure equal and fair treatment.

Societal and environmental well-being: AI systems should be used to enhance positive social change, sustainability, and ecological responsibility.

Accountability: Mechanisms should be put in place to ensure responsibility and accountability for AI systems and their outcomes.

But how are the above aspects being adopted within the Aerospace industry?

Today I provide an overview of what the EASA Concept Paper says about the Trustworthiness Analysis Objectives and Anticipated Means of Compliance.

I cover:

Introduction to the Trustworthiness aspects in Aerospace

EASA’s Trustworthiness Analysis: Objectives and AMCs

Conclusions

Let’s dive in! 🤿

Trustworthiness aspects in Aerospace

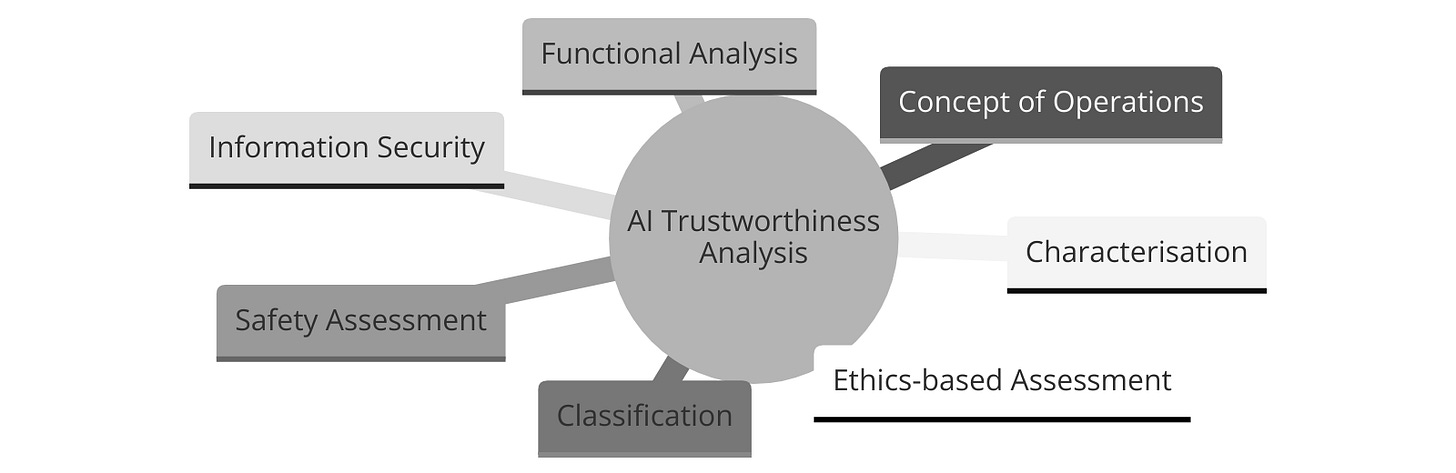

As introduced in the article "The Regulatory Landscape of AI in Aerospace", the Trustworthiness Analysis is Building Block #1 of the EASA guidance.

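The concept paper introduces Objectives and Anticipated Means of Compliance (AMC) addressing the following aspects of the AI application:

Characterisation of the AI application

Concept of operations for the AI application

Functional analysis of the AI-based system

Classification of the AI application

Safety assessment of ML applications

Information security

Ethics-based assessment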

EASA’s Trustworthiness Analysis: Objectives and AMCs

Characterisation of the AI application

The aim is to ensure a comprehensive understanding of the interaction between end users and AI-based systems.

The Objectives and AMC serve to promote a thorough and precise characterisation of the human-AI relationship, ensuring clarity in responsibilities and expectations, which is crucial for maintaining safety and reliability.

Objective CO-01: The applicant should identify the list of end users that are intended to interact with the AI-based system, together with their roles, their responsibilities (including indication of the level of teaming with the AI-based system, i.e. none, cooperation, collaboration) and expected expertise (including assumptions made on the level of training, qualification and skills).

Objective CO-02: For each end user, the applicant should identify which goals and associated high-level tasks are intended to be performed in interaction with the AI-based system.

Objective CO-03: The applicant should determine the AI-based system taking into account domain-specific definitions of ‘system’.
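To make CO-01 and CO-02 more concrete, here is a minimal Python sketch of how an applicant might keep the end-user characterisation as structured data and flag incomplete entries. The names `TeamingLevel`, `EndUser` and `missing_items`, and the example pilot entry, are hypothetical and not taken from the concept paper; only the items recorded (role, responsibilities, teaming level, expected expertise, goals and high-level tasks) come from the two objectives.

```python
from dataclasses import dataclass, field
from enum import Enum


class TeamingLevel(Enum):
    """Levels of teaming with the AI-based system named in Objective CO-01."""
    NONE = "none"
    COOPERATION = "cooperation"
    COLLABORATION = "collaboration"


@dataclass
class EndUser:
    """Hypothetical entry in an end-user register covering CO-01 and CO-02."""
    role: str
    responsibilities: list[str]
    teaming: TeamingLevel
    expected_expertise: str  # assumed training, qualification and skills
    # CO-02: each goal maps to the high-level tasks performed with the AI-based system
    goals_and_tasks: dict[str, list[str]] = field(default_factory=dict)


def missing_items(user: EndUser) -> list[str]:
    """Return the CO-01/CO-02 items that are still empty for this end user."""
    gaps = []
    if not user.responsibilities:
        gaps.append("responsibilities")
    if not user.expected_expertise.strip():
        gaps.append("expected expertise")
    if not user.goals_and_tasks:
        gaps.append("goals and high-level tasks")
    elif any(not tasks for tasks in user.goals_and_tasks.values()):
        gaps.append("tasks for every goal")
    return gaps


# Hypothetical example entry
pilot = EndUser(
    role="pilot flying",
    responsibilities=["final authority over the flight path"],
    teaming=TeamingLevel.COOPERATION,
    expected_expertise="type-rated airline pilot",
    goals_and_tasks={"safe approach": ["monitor AI traffic alerts", "select manoeuvre"]},
)
print(missing_items(pilot))  # []
```

Keeping the register as data rather than free text is a design choice, not an EASA requirement; it simply makes gaps easy to spot before the characterisation is reviewed.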

Concept of operations for the AI application

These Objectives aim to ensure that AI-based systems are designed with a clear understanding of their operational context, including the operational domain (OD) referred to in CO-04, and of their user interactions, which is crucial for their safe and effective implementation.

Objective CO-04: The applicant should define and document the ConOps for the AI-based system, including the task allocation pattern between the end user(s) and the AI-based system. A focus should be put on the definition of the OD and on the capture of specific operational limitations and assumptions.

Objective CO-05: The applicant should document how end users’ inputs are collected and accounted for in the development of the AI-based system.

Functional analysis of the AI-based system

The intent of the Functional Analysis is to break down the system's operations into individual functions and to further decompose these functions down to the most granular level. The purpose is to understand precisely how the system works at every level and how its various functions are distributed and interconnected.

This analysis helps in identifying and documenting each specific function of the AI-based system, allowing for a thorough understanding of its capabilities, limitations, and the interrelation between its various components. It's a critical step to ensure that the system's design and operation are fully understood and can be safely integrated.

Objective CO-06: The applicant should perform a functional analysis of the system, as well as a functional decomposition and allocation down to the lowest level.
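A functional decomposition is naturally a tree: each function splits into sub-functions, and the lowest-level functions are the ones that get allocated. The hypothetical Python sketch below, with an invented traffic-awareness example and invented allocation targets, checks that every lowest-level function has been allocated, which is one way to read the "down to the lowest level" part of CO-06.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Function:
    """A node in a hypothetical functional decomposition tree (CO-06)."""
    name: str
    children: list["Function"] = field(default_factory=list)
    allocated_to: Optional[str] = None  # e.g. "end user", "AI/ML constituent"; set on leaves


def leaves(fn: Function) -> list[Function]:
    """Collect the lowest-level functions by depth-first traversal."""
    if not fn.children:
        return [fn]
    result = []
    for child in fn.children:
        result.extend(leaves(child))
    return result


def unallocated(fn: Function) -> list[str]:
    """Names of lowest-level functions that have not been allocated yet."""
    return [leaf.name for leaf in leaves(fn) if leaf.allocated_to is None]


# Hypothetical example: a traffic-awareness function split into sub-functions
root = Function("provide traffic awareness", [
    Function("detect intruder", [
        Function("acquire camera image", allocated_to="sensor item"),
        Function("identify aircraft in image", allocated_to="AI/ML constituent"),
    ]),
    Function("decide on avoidance manoeuvre", allocated_to="end user"),
    Function("alert crew"),
])
print(unallocated(root))  # ['alert crew']
```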

Classification of the AI application

The intent of the Classification of the AI application is for the applicant to categorise the AI-based system according to the defined levels of automation and human-AI interaction.

Each level, as defined in Table 2 of the concept paper, describes the degree of automation and the extent of the end user's authority over the system.

Objective CL-01: The applicant should classify the AI-based system, based on the levels presented in Table 2, with adequate justifications.
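The following Python sketch illustrates CL-01 as a simple consistency check. It assumes the Level 1 and Level 2 classes of the EASA guidance (1A human augmentation, 1B human cognitive assistance, 2A human-AI cooperation, 2B human-AI collaboration) and adds a simplifying assumption: that the teaming level recorded under CO-01 should match the class (no teaming for Level 1, cooperation for 2A, collaboration for 2B). `AILevel`, `COMPATIBLE` and `check_classification` are hypothetical names; the real classification remains a justified engineering judgement, not a lookup table.

```python
from enum import Enum


class AILevel(Enum):
    """Level 1 and Level 2 classes of the EASA guidance (names assumed here)."""
    L1A = "Level 1A: human augmentation"
    L1B = "Level 1B: human cognitive assistance"
    L2A = "Level 2A: human-AI cooperation"
    L2B = "Level 2B: human-AI collaboration"


# Simplifying assumption: CO-01 teaming level -> compatible CL-01 classes
COMPATIBLE = {
    "none": {AILevel.L1A, AILevel.L1B},
    "cooperation": {AILevel.L2A},
    "collaboration": {AILevel.L2B},
}


def check_classification(level: AILevel, teaming: str, justification: str) -> list[str]:
    """Return findings to resolve before the classification is argued."""
    findings = []
    if not justification.strip():
        findings.append("missing justification")
    if teaming not in COMPATIBLE:
        findings.append(f"unknown teaming level: {teaming!r}")
    elif level not in COMPATIBLE[teaming]:
        findings.append(f"{level.name} is inconsistent with teaming level {teaming!r}")
    return findings


print(check_classification(AILevel.L2A, "cooperation", "AI proposes, pilot decides"))  # []
```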

Safety assessment of ML applications

The intent of the Safety assessment of ML applications is for the applicant to conduct a thorough safety evaluation for all AI-based systems and subsystems.

This safety assessment determines and manages the specific risks associated with AI/ML technology. This includes defining the Development Assurance Level (DAL) or Software Assurance Level (SWAL) appropriate for each AI/ML constituent, so that the rigour of verification is commensurate with that level and compliant with existing rules and guidance.

Objective SA-01: The applicant should perform a safety (support) assessment for all AI-based (sub)systems, identifying and addressing specificities introduced by AI/ML usage.

Objective SA-02: The applicant should identify which data needs to be recorded for the purpose of supporting the continuous safety assessment.

Objective SA-03: In preparation of the continuous safety assessment, the applicant should define metrics, target values, thresholds and evaluation periods to guarantee that design assumptions hold.
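SA-02 and SA-03 together suggest a simple monitoring loop: record in-service data, then compare a metric against its target value and threshold over an evaluation period. Below is a minimal Python sketch, assuming a metric where higher values are worse (a hypothetical missed-detection rate) and a plain average over the period; the names, values and averaging rule are illustrative only.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class SafetyMetric:
    """Hypothetical SA-03 metric definition; higher values are assumed to be worse."""
    name: str
    target: float      # value the design assumptions rely on
    threshold: float   # value that should trigger an investigation
    period: timedelta  # evaluation period


def evaluate(metric: SafetyMetric, records: list[tuple[date, float]], end: date) -> str:
    """Average the values recorded (per SA-02) in the period ending at `end` and compare."""
    start = end - metric.period
    window = [value for day, value in records if start < day <= end]
    if not window:
        return "no data"
    mean = sum(window) / len(window)
    if mean > metric.threshold:
        return "threshold exceeded"
    if mean > metric.target:
        return "above target"
    return "within target"


# Hypothetical metric and in-service records
missed_detection = SafetyMetric("missed-detection rate", target=0.01, threshold=0.02,
                                period=timedelta(days=30))
records = [(date(2024, 1, 5), 0.010), (date(2024, 1, 20), 0.016), (date(2023, 11, 1), 0.5)]
print(evaluate(missed_detection, records, end=date(2024, 1, 31)))  # above target
```

The plain average is only one option; depending on the metric, a rate normalised by operating hours or flights may be more meaningful.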

Information Security Objectives

The intent of the Information Security Objectives for AI/ML applications is to ensure that applicants identify, address, and mitigate any information security risks related to AI/ML that could impact safety.

Objective IS-01: For each AI-based (sub)system and its data sets, the applicant should identify those information security risks with an impact on safety, identifying and addressing specific threats introduced by AI/ML usage.

Objective IS-02: The applicant should document a mitigation approach to address the identified AI/ML-specific information security risk.

Objective IS-03: The applicant should validate and verify the effectiveness of the security controls introduced to mitigate the identified AI/ML-specific information security risks to an acceptable level.
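IS-01 to IS-03 form a chain: each identified risk should have a documented mitigation, and each mitigation should have verification evidence. The hypothetical Python register below, with invented threats, assets and mitigations, flags the entries where that chain is still broken.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SecurityRisk:
    """Hypothetical register entry linking IS-01, IS-02 and IS-03."""
    threat: str                       # IS-01: AI/ML-specific threat with a safety impact
    asset: str                        # the (sub)system or data set concerned
    mitigation: Optional[str] = None  # IS-02: documented mitigation approach
    verified: bool = False            # IS-03: effectiveness validated and verified


def open_items(register: list[SecurityRisk]) -> list[str]:
    """List risks that still lack a documented mitigation or verification evidence."""
    items = []
    for risk in register:
        if risk.mitigation is None:
            items.append(f"{risk.threat} on {risk.asset}: no mitigation (IS-02)")
        elif not risk.verified:
            items.append(f"{risk.threat} on {risk.asset}: mitigation not verified (IS-03)")
    return items


# Hypothetical register entries
register = [
    SecurityRisk("data poisoning", "training data set", "signed data pipeline", verified=True),
    SecurityRisk("adversarial input", "camera-based detector", "input plausibility monitor"),
    SecurityRisk("model tampering", "deployed model"),
]
for item in open_items(register):
    print(item)
```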

Ethics-Based Assessment Objectives

The intent of the Ethics-Based Assessment Objectives is to ensure that AI systems are deployed ethically, in line with trustworthiness principles, societal norms, and regulatory requirements.

This includes conducting an ethics assessment, preventing the AI from causing user dependency or manipulation, adhering to data protection laws like GDPR, avoiding unfair biases in AI decision-making, informing users of their interaction with AI and any personal data collection, assessing and mitigating environmental impacts throughout the AI's life cycle, identifying new skill requirements for users, and preventing user de-skilling by providing necessary training.

Objective ET-01: The applicant should perform an ethics-based trustworthiness assessment for any AI-based system developed using ML techniques or incorporating ML models.

Objective ET-02: The applicant should ensure that the AI-based system bears no risk of creating overreliance, attachment, stimulating addictive behaviour, or manipulating the end user’s behaviour.

Objective ET-03: The applicant should comply with national and EU data protection regulations (e.g. GDPR), i.e. involve their Data Protection Officer, consult with their National Data Protection Authority, etc.

Objective ET-04: The applicant should ensure that the creation or reinforcement of unfair bias in the AI-based system, regarding both the data sets and the trained models, is avoided, as far as such unfair bias could have a negative impact on performance and safety.

Objective ET-05: The applicant should ensure that end users are made aware of the fact that they interact with an AI-based system, and, if applicable, whether some personal data is recorded by the system.

Objective ET-06: The applicant should perform an environmental impact analysis, identifying and assessing potential negative impacts of the AI-based system on the environment and human health throughout its life cycle (development, deployment, use, end of life), and define measures to reduce or mitigate these impacts.

Objective ET-07: The applicant should identify the need for new skills for users and end users to interact with and operate the AI-based system, and mitigate possible training gaps (link to Provision ORG-07, Provision ORG-08).

Objective ET-08: The applicant should perform an assessment of the risk of de-skilling of the users and end users and mitigate the identified risk through a training needs analysis and a consequent training activity (link to Provision ORG-07, Provision ORG-08).

Conclusions

Trustworthiness is crucial for AI integration into society, as it addresses ethical, legal, and technical concerns, ensuring that AI technologies benefit humanity while minimising risks and adverse impacts.

Adhering to these principles is not merely a matter of regulatory compliance but a way of encouraging AI technologies that respect and enhance human autonomy, ensure safety, and operate ethically within our societal and environmental contexts.

This is all for today.

See you next week 👋

Disclaimer: The information provided in this newsletter and related resources is intended for informational and educational purposes only. It reflects both researched facts and my personal views. It does not constitute professional advice. Any actions taken based on the content of this newsletter are at the reader's discretion.